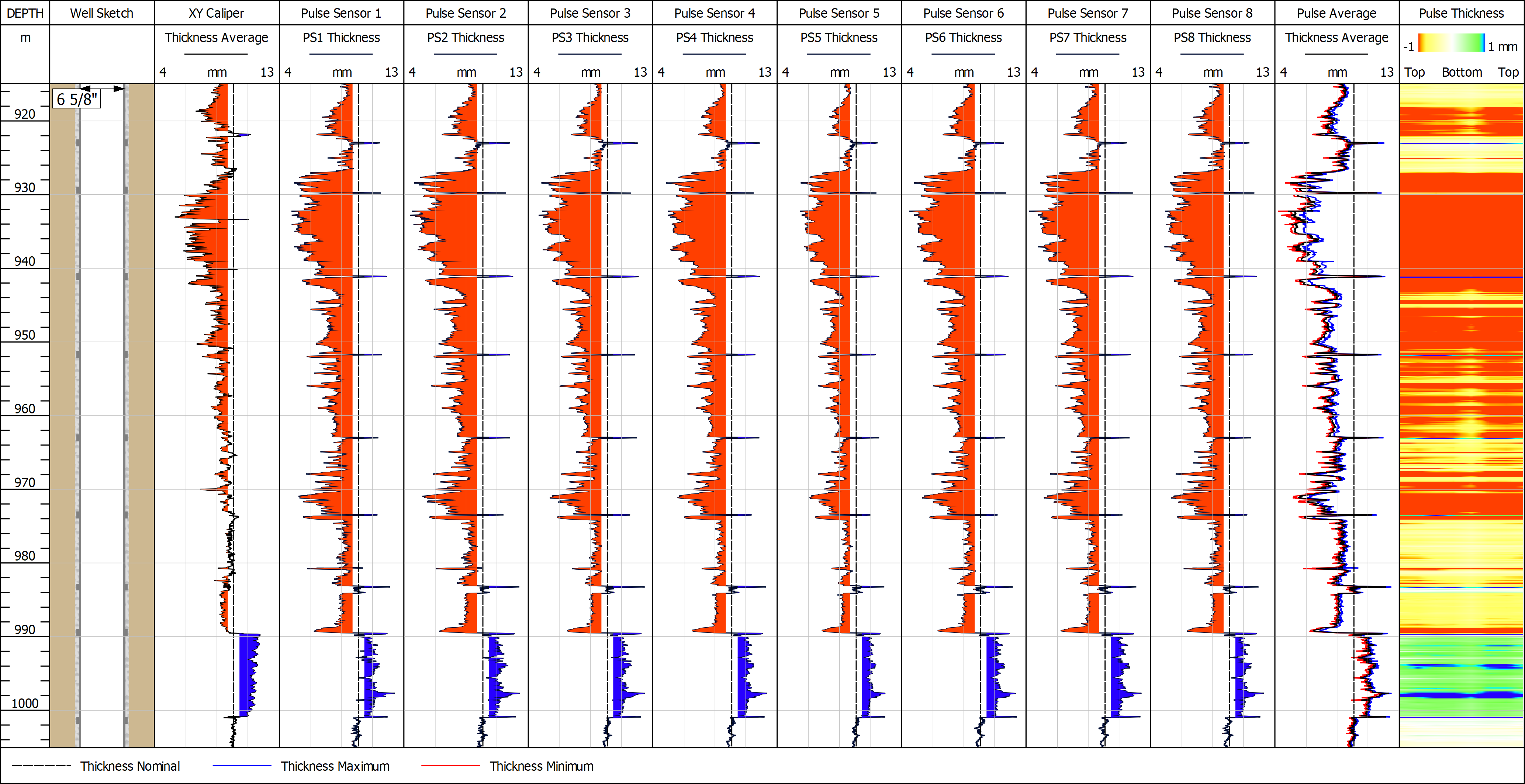

Tube inspection tends to focus on three main attributes: tube wall thickness, tube wall defects and tube geometry. And although tube geometry or profiling is important, wall thickness and defect sensing are typically the two main objectives from an integrity perspective.

With these applications in mind, the industry has developed a number of diagnostic technologies and methods aimed at tracking the condition of primary tubulars. Each has its strengths and drawbacks in terms of accuracy, resolution, coverage, efficiency and cost when measured against its ability to assess wall thickness, defects and geometry. In a recent industry survey of 100 well integrity management professionals conducted by TGT, mechanical “multifinger” calipers were identified as the most widely used diagnostic method for evaluating production tubing. For production casing, electromagnetic and ultrasound techniques were the most popular, but calipers were still prominent.

Mechanical calipers offer a broad mix of attributes that make them suitable for tube diagnostics. They are widely available to suit all sizes of production tubing and casing, they are relatively inexpensive and easy to deploy, and they can provide a comprehensive assessment in all three areas of wall thickness, defect sensing and tube geometry. However, calipers have several application-specific drawbacks, mainly in the accuracy with which they determine actual wall thickness in some scenarios and in their ability to sense small defects.

According to the industry survey of integrity managers, the most important attributes experts consider when selecting diagnostic methods to evaluate production tubing are: the accuracy and sectorial coverage of wall thickness measurements, and the completeness and resolution of defect sensing. Geometry assessment is a lesser priority. Furthermore, the experts required a wall thickness accuracy of at least ±3% and a defect resolution of approximately 3 mm.

To track tube wall thickness, calipers measure internal diameter (ID) and estimate thickness by assuming a nominal outside diameter (OD). Variations in the actual OD or external corrosion, both invisible to calipers, can invalidate the thickness value. Also, scale or wax deposits on the inner surface can mask internal defects and lead to further false thickness computations. And while the accuracy of caliper ID measurements is approximately ±5% (±0.5 mm), the total system error for wall thickness can grow to ±10% (±1 mm), or worse if there is scale or external corrosion. This falls well short of the ±3% accuracy currently required by integrity managers.
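The dependence on an assumed OD can be illustrated with a minimal sketch. The function names and the example dimensions (a nominal 88.9 mm OD, a measured 76.0 mm ID, and 1 mm of external diameter loss) are illustrative assumptions, not values taken from the survey or from any particular caliper tool.

```python
def estimated_wall_thickness(id_measured_mm: float, od_nominal_mm: float) -> float:
    """Wall thickness a caliper would report: half the gap between
    the assumed (nominal) OD and the measured ID."""
    return (od_nominal_mm - id_measured_mm) / 2.0


def thickness_bias(id_measured_mm: float, od_nominal_mm: float, od_actual_mm: float):
    """Return (absolute bias in mm, bias relative to the true thickness)
    when the actual OD differs from the nominal OD assumed by the caliper."""
    estimated = estimated_wall_thickness(id_measured_mm, od_nominal_mm)
    true_thickness = (od_actual_mm - id_measured_mm) / 2.0
    bias = estimated - true_thickness
    return bias, bias / true_thickness


# Hypothetical example: external corrosion has removed 1 mm from the OD,
# which the caliper cannot see because it only measures the inner surface.
reported = estimated_wall_thickness(76.0, 88.9)
bias_mm, bias_fraction = thickness_bias(76.0, 88.9, 87.9)
print(f"reported thickness: {reported:.2f} mm")
print(f"overestimate: {bias_mm:.2f} mm ({bias_fraction:.1%} of true thickness)")
```

Under these assumed numbers, the caliper overstates the wall by half of the unseen OD loss, which alone exceeds a ±3% tolerance before any ID measurement error or scale is considered.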

For defect sensing, calipers offer highly precise radial measurements via 24, 40 or 60 fingers spaced azimuthally around the inner tube surface. Thin, 1.6 mm fingertips can sense the smallest defects, provided the defect lies in the path of a finger passing over it. In practice, there are gaps between fingers that vary according to tube size and finger density. For example, the gap between fingers of a 24-finger caliper in 3-1/2 inch tubing is about 7 mm. This means that the fingers sense only about 10% to 30% of the inner wall surface, and small defects or holes can pass undetected between fingers.
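The gap and coverage figures follow from simple geometry, sketched below. The function name and the 76.0 mm inner diameter used for 3-1/2 inch tubing are assumptions made for illustration; the actual ID depends on the tubing weight, so the computed gap will differ somewhat from the figure quoted above.

```python
import math


def finger_coverage(inner_diameter_mm: float, n_fingers: int, tip_width_mm: float = 1.6):
    """Return (gap between adjacent fingertips in mm, fraction of the
    inner circumference touched by fingertips), assuming evenly spaced fingers."""
    circumference = math.pi * inner_diameter_mm
    pitch = circumference / n_fingers            # centre-to-centre spacing
    gap = max(pitch - tip_width_mm, 0.0)         # untouched arc between tips
    coverage = min(n_fingers * tip_width_mm / circumference, 1.0)
    return gap, coverage


# Hypothetical comparison of finger counts in the same tubing (assumed ID of 76.0 mm).
for fingers in (24, 40, 60):
    gap_mm, covered = finger_coverage(76.0, fingers)
    print(f"{fingers} fingers: gap {gap_mm:.1f} mm, coverage {covered:.0%}")
```

With the assumed ID, 24 fingers leave a gap of roughly 8 mm and touch about 16% of the circumference, which is of the same order as the figures in the text. Adding fingers narrows the gap, but a hole smaller than the remaining gap can still slip through undetected.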